Technology

10:38, 27-Jan-2019

Researchers say Amazon face-detection technology could have bias

CGTN

Facial-detection technology that Amazon is marketing to law enforcement often misidentifies women, particularly those with darker skin, according to researchers from MIT and the University of Toronto.

The facial recognition tool, called Rekognition, can be used for law enforcement tasks ranging from fighting human trafficking to finding lost children, and, just like computers, it can be a force for good in responsible hands, according to a company spokesman.

The researchers said that in their tests, Amazon's technology labeled darker-skinned women as men 31 percent of the time. Lighter-skinned women were misidentified seven percent of the time. Darker-skinned men had a one percent error rate, while lighter-skinned men had none.

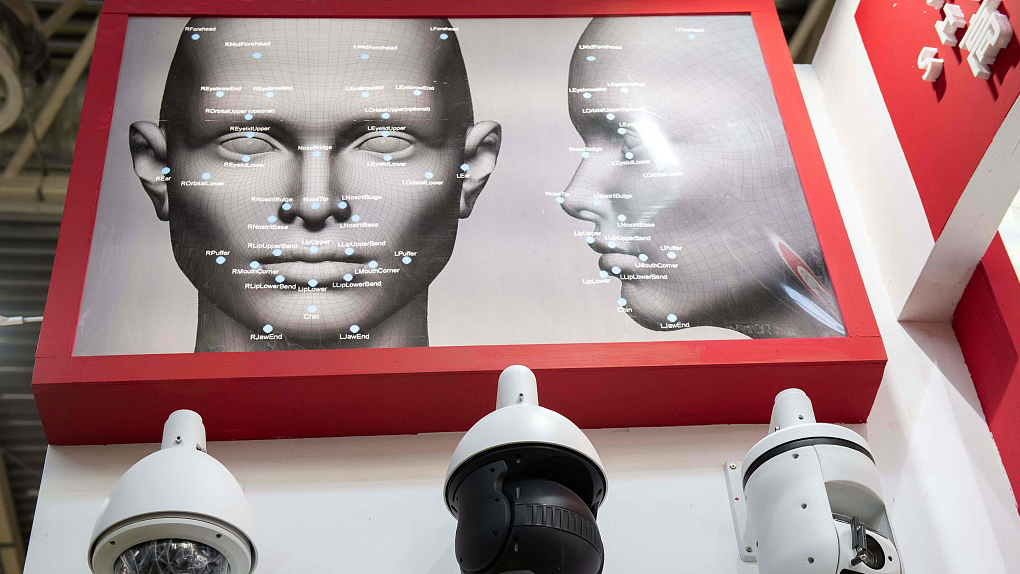

Facial recognition /VCG Photo

Artificial intelligence systems can mimic the biases of their human creators as they make their way into everyday life. The new study, released late Thursday, warns of the potential for abuse and of threats to privacy and civil liberties from facial-detection technology.

Matt Wood, general manager of artificial intelligence with Amazon's cloud-computing unit, said the study uses "facial analysis" and not "facial recognition" technology.

Wood said facial analysis "can spot faces in videos or images and assign generic attributes such as wearing glasses; recognition is a different technique by which an individual face is matched to faces in videos and images."

Amazon's reaction shows that it isn't taking the "really grave concerns revealed by this study seriously," said Jacob Snow, an attorney with the American Civil Liberties Union (ACLU).

Early this year, some Amazon investors sided with the ACLU in urging the tech giant not to sell a powerful face recognition tool to police.

The ACLU is leading the effort against Amazon's Rekognition product. Weeks ago it delivered a petition with 152,000 signatures to the company's Seattle headquarters, telling the company to "cancel this order."

Some Amazon investors have also asked the company to stop selling the tool, out of fear that it makes Amazon vulnerable to lawsuits.

Researchers from the University of Toronto said a May 2017 study had found similar problems in technology from Microsoft and IBM, but both companies say they have since improved their technologies.

The second study, which included Amazon, was done in August 2018. Their paper will be presented on Monday at an artificial intelligence conference in Honolulu.

Wood also said Amazon has updated its technology since the study and done its own analysis with "zero false positive matches."

Amazon's website credits Rekognition with helping the Washington County Sheriff's Office in Oregon cut the time it took to identify suspects from hundreds of thousands of photo records.

Source(s): AP

Copyright © 2018 CGTN. Beijing ICP prepared NO.16065310-3
